Somewhere between the demo and the boardroom, most AI initiatives quietly disappear. Not because the technology failed. Because the organization around it was not ready to do anything with what it learned.

That gap – between a pilot that worked and a product that shipped – is where billions in AI investment go dark every year. Closing it is not a matter of running better experiments. It is a matter of building the strategic and operational conditions that make those experiments mean something.

This article is about how to do that. What follows covers the framework behind it: how to structure the validation phase that produces decisions, what makes AI transformation stick at the organizational level, and what the transition from AI pilot to production actually demands.

Key Takeaways

- An AI pilot is a validation tool, not a delivery commitment – its job is to reduce uncertainty before a larger investment, not to replace one.

- Most AI initiatives fail not because of the technology but because of weak discovery, undefined success metrics, absent ownership, and no clear path to production.

- Effective AI transformation starts with a company’s data landscape and governance structure, not with selecting tools or automating the first visible workflow.

- Companies that scale AI pilots successfully treat the work as a coordinated transformation program, not a collection of isolated experiments – each initiative feeds into the next.

- Moving from pilot to production AI requires organizational readiness: defined ownership, scalable architecture, change management, and MLOps discipline.

What an AI Pilot Actually Is and Where It Belongs

An AI pilot is a limited, controlled initiative used to test whether a specific idea is worth developing further. It is not a full product or enterprise-wide transformation. Its job is to help a company answer a few practical questions before a bigger investment happens:

- Can this use case work with our data?

- Will people actually use it?

- Does it solve a real business problem?

- What could block it later – integration, compliance, security, cost, or ownership?

That is where a pilot adds value. It gives teams something more useful than assumptions, without forcing them into full-scale delivery too soon.

What is an AI pilot really for?

At its best, a pilot is a decision stage. It helps a business validate whether an idea should be scaled, refined, reframed, or stopped. That matters more than many companies expect. A pilot is not there to impress stakeholders with a flashy demo. Its job is to create enough evidence to support a smart next step.

Why do companies get AI pilots wrong?

The confusion usually starts with expectations. Some businesses treat a pilot like a mini production launch. Others treat it like proof that the whole AI direction is correct. Both assumptions create problems.

A pilot can help you:

- validate a use case;

- test assumptions with real inputs;

- surface risks early;

- clarify whether the idea deserves more investment.

But it cannot replace:

- AI governance;

- change management;

- workflow redesign;

- adoption planning;

- production ownership;

- long-term monitoring.

So yes, a pilot can prove something important. But it cannot carry the full weight of transformation on its own.

When does an AI pilot make sense?

A pilot is usually the right move when the use case is still uncertain – when the workflow is new or high-risk, data quality is unclear, business value is still a hypothesis, the team is unsure how users will respond, or legal, security, and integration concerns may affect feasibility.

In these cases, going straight into full delivery can be the more expensive mistake. That connects directly with a point from Olga Hrom, our Director of Pre-Sales Strategy & Delivery:

Failed AI pilots should not automatically be treated as a bad outcome. In many cases, they are part of the learning process that helps companies avoid bigger mistakes later.

The real failure is spending months building the wrong thing because no one tested the assumptions early enough.

When is a pilot not necessary?

Not every AI initiative needs one. If the company is implementing a low-risk, well-understood capability with clear requirements and proven patterns, a pilot may only slow things down. The better question is not: “Should we always run a pilot?” It is: “Is uncertainty high enough that validation is more valuable than speed?”

That distinction matters. Without it, companies end up piloting obvious things and overcommitting to uncertain ones.

So where does an AI pilot belong? Right in the middle – after initial strategic thinking, but before serious production investment: the validation step between idea and scale. Used properly, it is not a dead end. It is a starting point: a disciplined way to learn where operationalizing AI makes sense, what needs to change, and what should not move forward at all.

How Synapora Approaches an AI Pilot

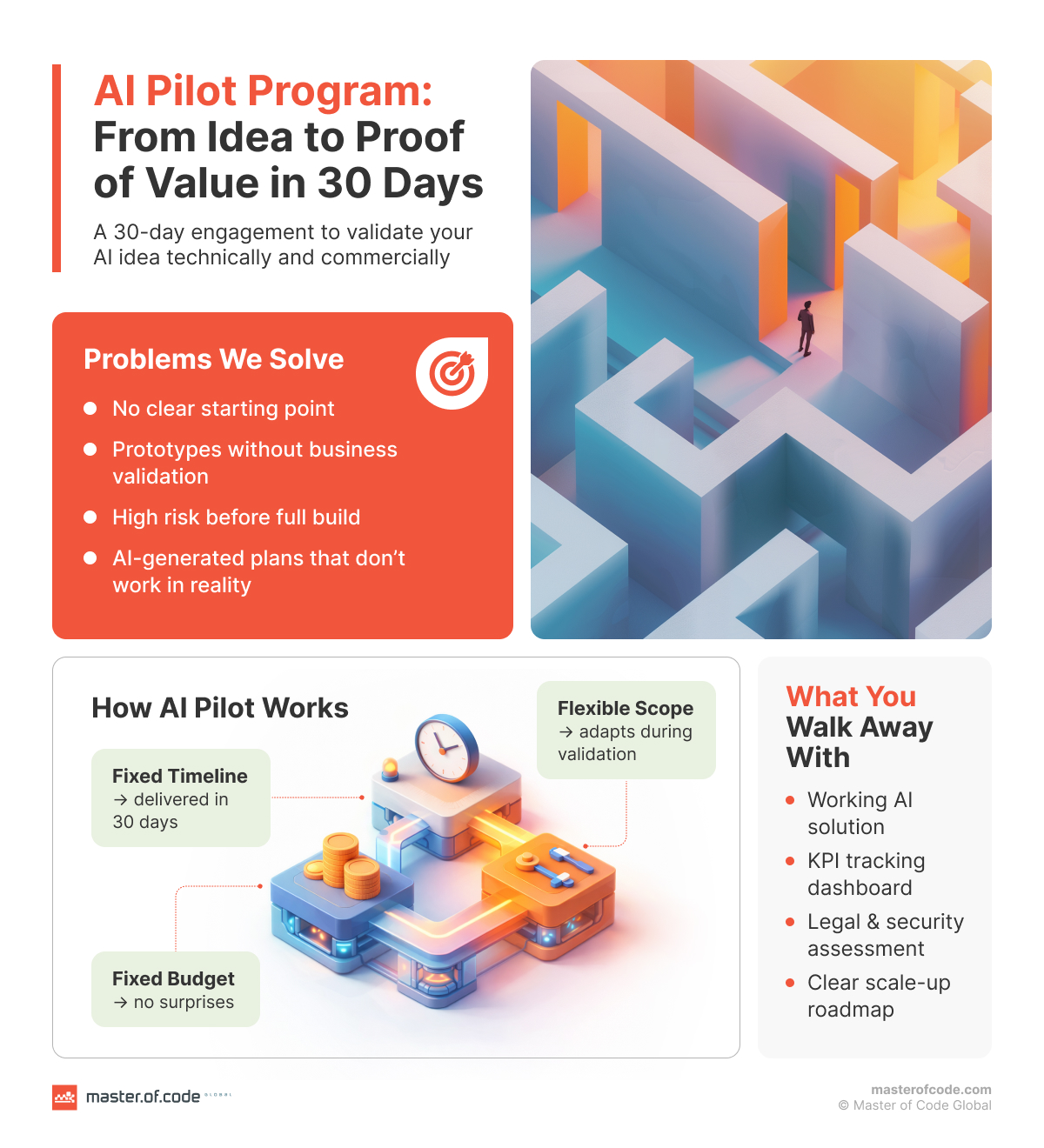

Our AI pilot services run in two phases: Discovery and a Proof of Value build, within a fixed budget and fixed timeline of 30 days. What stays flexible is the technical approach. With AI projects, requirements may shift once a team actually digs into the data and systems. The FFF model – Fixed Budget, Fixed Timeline, Flexible Scope – gives clients predictability without locking the work into a scope that stops serving the actual goal.

Discovery covers more ground than clients usually expect: tech fit, legal exposure, security and compliance, data infrastructure, and business case validation. It is also where AI-generated BRDs get pressure-tested. Most clients arrive with requirements documents built in ChatGPT or Claude, which is genuinely useful – it compresses early thinking and forces structure. But it is not a final scope. As Olga Hrom puts it: “We add a human expert layer on top of AI-generated inputs. That is where the real value of Discovery sits.”

The build phase produces a Proof of Value, not an AI Proof of Concept. The difference matters. A PoC validates technical feasibility. A PoV validates whether the solution hits actual KPIs – measured by analytics instrumented into the working solution from day one.

Every pilot is delivered by a cross-functional team of four to five people: a Project Manager, a Solution Lead, AI Developers and Engineers, and Domain SMEs – which may include an AI-CX expert, a data engineer, or a legal and security consultant depending on the case. Specialists are brought in at the stages where their input matters most.

At the end of 30 days, you walk away with:

- a working solution tested against defined success criteria – adoption rate, time saved, task completion, or whatever metric matters to your case;

- a roadmap for scaling the pilot, with the architecture and next-phase scope already defined, so the decision about whether to move into AI MVP development or a full-scale product build is based on evidence.
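To make "tested against defined success criteria" concrete, here is a minimal Python sketch of how pilot results could be checked against targets agreed before the build. Everything in it is an illustrative assumption rather than Synapora's actual tooling: the `Criterion` shape, the metric names, the target values, and the decision rule (all criteria met means scale, at least half means refine, otherwise stop).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One success criterion agreed before the pilot starts (hypothetical shape)."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


def evaluate_pilot(criteria, observed):
    """Return (decision, per-criterion results).

    The decision rule is an assumption for illustration:
    all criteria met -> "scale", at least half -> "refine", otherwise "stop".
    A metric with no observation counts as not met.
    """
    if not criteria:
        raise ValueError("define success criteria before evaluating the pilot")
    results = {
        c.name: (c.name in observed and c.met(observed[c.name]))
        for c in criteria
    }
    passed = sum(results.values())
    if passed == len(criteria):
        decision = "scale"
    elif passed * 2 >= len(criteria):
        decision = "refine"
    else:
        decision = "stop"
    return decision, results


# Hypothetical targets and measurements, for illustration only.
criteria = [
    Criterion("adoption_rate", target=0.60),
    Criterion("minutes_saved_per_task", target=5.0),
    Criterion("task_error_rate", target=0.05, higher_is_better=False),
]
observed = {
    "adoption_rate": 0.72,
    "minutes_saved_per_task": 3.5,
    "task_error_rate": 0.04,
}
decision, results = evaluate_pilot(criteria, observed)
print(decision, results)
```

In this made-up run two of three criteria are met, so the sketch returns "refine". The point is less the rule itself than the fact that the rule is written down before the results come in.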

How the Market Typically Approaches AI Implementation and Why So Many Initiatives Stall

Artificial intelligence adoption is no longer the challenge. McKinsey’s report found that 88% of companies now use AI regularly in at least one business function. The challenge is everything that comes after the first experiment.

Almost all companies invest in AI, but just 1% believe they are at maturity. Nearly two-thirds of organizations have not yet begun scaling AI across the business. And the gap between experimentation and operational value is widening, not closing. MIT’s NANDA initiative, drawing on 150 executive interviews and analysis of 300 public deployments, found that about 5% of pilot programs achieve rapid revenue acceleration – the vast majority stall, delivering little to no measurable impact on P&L.

This is not primarily a technology problem. The models work. The tooling has improved dramatically. What fails is the organizational and strategic layer around the tech.

Where Most Implementations Break Down

The pattern is consistent across industries and company sizes. Initiatives tend to stall at one of several predictable points:

- Discovery produces documents, not decisions. Many engagements end with a strategy report or a requirements document. The client has artifacts but no working validation of whether the idea is sound, feasible, or worth the next investment. As Olga Hrom puts it: “Standalone Discovery is no longer valuable on its own. Clients need something they can test, show to users, and employ to make a real decision.”

- Proof of concept validates technology, not business value. A prototype that works in a controlled environment tells you the model can perform a task. It does not tell you whether people will adopt it, whether it integrates cleanly into existing workflows, or whether it moves the metrics that matter. IDC research found that 88% of observed PoCs never make it to wide-scale deployment – for every 33 a company launches, only four graduate to production.

- Pilots launch without a defined next owner. Even well-executed pilots frequently stall after the engagement ends. No one is formally responsible for the transition to production. Sponsorship fades. The architecture is not documented for scale. The team that built it moves on.

- AI governance and compliance are left until last. Security reviews, data ownership questions, and legal compliance are treated as late-stage gates rather than early-stage inputs. By the time they surface, they either block the work entirely or force expensive rework.

- Success is never defined upfront. Industry analysis consistently identifies unclear success metrics as one of the leading drivers of project failure – teams that define expectations and AI ROI upfront avoid this trap and deliver sustained value.

The Result

A March 2026 survey of 650 enterprise AI leaders found that 78% of businesses have at least one pilot running, but only 14% have successfully scaled an agent to organization-wide operational use. The gap is not primarily a technology problem – most lack the evaluation infrastructure, monitoring tooling, and dedicated ownership structures needed to move a promising pilot into reliable production.

The organizations that close that gap are not necessarily running more experiments. They are running better-structured ones, with governance built in from day one, business validation baked into the build, and a clear path to production defined before anything gets built.

The Framework That Actually Supports AI-Driven Transformation

Most organizations begin their journey by looking for something to automate. They pick a workflow, select a tool, and build an agent to handle it. That approach works for trials. It does not work for transformation.

The fundamental problem is sequencing. When you start with automation before you understand the data and system landscape underneath it, you are building on an unstable foundation. Agents begin to hallucinate. Outputs become inconsistent. Teams spend more time correcting AI behavior than benefiting from it. The initiative stalls – not because the technology failed, but because the groundwork was never laid.

Start with Data, not Tools

As Dmytro Hrytsenko, our CEO, puts it:

Every company is governed by its data. Before you can build anything reliable on top of AI, you need to understand what data exists, where it lives, and how it connects.

In most organizations, that data is scattered. CRM records in one system. Project documentation in another. Legal files somewhere else. Some of it in the cloud, some on-premise, structured and unstructured mixed together. That fragmentation is not a failure – it is simply how companies grow. But it becomes a serious blocker the moment you try to build AI agents that need to draw on all of it coherently.

A transformation-ready approach addresses this before anything else:

- Map the data landscape – identify all sources across the organization, understand their structure, governance rules, and ownership;

- Build a unified, LLM-ready foundation – consolidate and prepare data so that models can work with it accurately within defined contexts, without hallucinating across unrelated inputs;

- Define governance and security upfront – data privacy policies, access controls, and compliance requirements need to be embedded from the start, not reviewed at the end.
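The first and third steps above can be sketched as a simple inventory. This is a minimal Python illustration, assuming a hypothetical `DataSource` record and made-up source names; a real data catalog would track far more than this.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DataSource:
    """One entry in a data landscape map (hypothetical fields)."""
    name: str
    location: str                 # e.g. "cloud" or "on-premise"
    structured: bool
    owner: Optional[str] = None
    governance_rules: List[str] = field(default_factory=list)


def readiness_gaps(sources):
    """Map each source name to the gaps that should be closed before agents use it."""
    gaps = {}
    for source in sources:
        missing = []
        if not source.owner:
            missing.append("no owner")
        if not source.governance_rules:
            missing.append("no governance rules")
        if missing:
            gaps[source.name] = missing
    return gaps


# Illustrative inventory; the names, owners, and rules are invented.
inventory = [
    DataSource("crm_records", "cloud", True, owner="sales-ops",
               governance_rules=["pii-masking"]),
    DataSource("project_docs", "cloud", False, owner="pmo"),
    DataSource("legal_files", "on-premise", False,
               governance_rules=["legal-hold"]),
]
gaps = readiness_gaps(inventory)
print(gaps)
```

Even a list this small makes the blockers visible: here one source has no governance rules and another has no owner, which is exactly the kind of finding that is cheaper to surface before any agent is built.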

Then Build Agents with Bounded Context

Once the data foundation is in place, agents can be trained on specific, well-defined datasets – becoming reliable experts within their domain rather than general-purpose tools trying to handle everything at once. Those specialized agents can then be combined into multi-agent workflows, where each component does one thing well and passes its output to the next.

This is a meaningful shift from how most pilots are built. A single agent with broad context tends to degrade in quality as complexity grows. A panel of narrow, well-trained agents working together produces more consistent, auditable, and scalable results.
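As a rough illustration of bounded context, the Python sketch below chains three narrow agents so that each one sees only the inputs it declares and hands a single output to the next. The `Agent` shape, the agent names, and the plain functions standing in for model calls are all assumptions made for the example, not a description of any particular framework.

```python
import re
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class Agent:
    """A narrow agent: declared inputs, one output (hypothetical shape)."""
    name: str
    reads: Tuple[str, ...]        # the only state keys this agent may see
    writes: str                   # the single key it produces
    run: Callable[[dict], object]


def run_pipeline(agents, state):
    """Run agents in order; each receives only its declared slice of the state."""
    state = dict(state)
    trace = []
    for agent in agents:
        missing = [key for key in agent.reads if key not in state]
        if missing:
            raise KeyError(f"{agent.name} is missing inputs: {missing}")
        bounded = {key: state[key] for key in agent.reads}
        state[agent.writes] = agent.run(bounded)
        trace.append((agent.name, agent.reads, agent.writes))
    return state, trace


# Plain functions stand in for model calls in this sketch.
def extract_order_id(ctx):
    match = re.search(r"ORD-\d+", ctx["ticket_text"])
    return match.group(0) if match else None


def classify(ctx):
    return "refund" if "refund" in ctx["ticket_text"].lower() else "other"


def draft_reply(ctx):
    return f"Routing {ctx['category']} request for {ctx['order_id']} to billing."


agents = [
    Agent("extractor", ("ticket_text",), "order_id", extract_order_id),
    Agent("classifier", ("ticket_text",), "category", classify),
    Agent("responder", ("order_id", "category"), "reply", draft_reply),
]
final, trace = run_pipeline(
    agents, {"ticket_text": "Please refund order ORD-1042, it arrived broken."}
)
print(final["reply"])
```

The trace records which agent read and wrote what, which is one simple way to get the auditability mentioned above. Note that the responder never sees the raw ticket text, only the two fields it declared.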

Treat AI as a Program, not a Project

The organizations that move from experimentation to sustained value share one structural trait: they treat AI transformation as an ongoing program with deliberate sequencing, not a collection of isolated initiatives.

That means defining how one initiative feeds into the next. It means establishing who owns outcomes at each stage. It means building feedback loops so that what is learned in a pilot informs the architecture of the next phase. And it means being willing to stop initiatives that do not meet their validation criteria – not as a failure, but as useful data.

The companies that skip this layer – running experiments without connecting them to an enterprise AI strategy – tend to accumulate pilots that never compound into anything. Each one teaches something, but nothing scales. The lesson from the market data and from our own delivery experience is the same: the technology is not the constraint. The operating model around it is.

Scaling an AI Pilot to Production: What the Transition Actually Requires

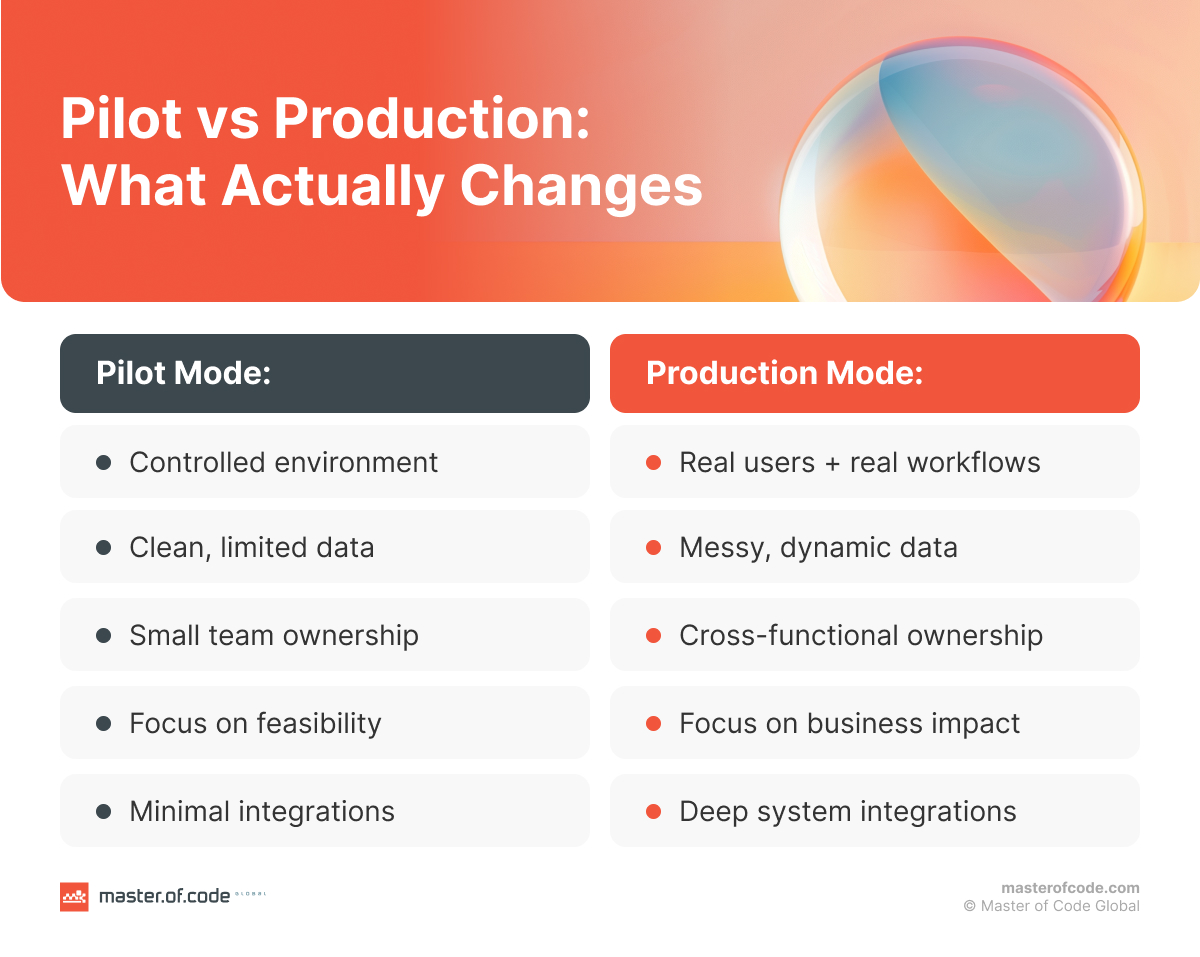

A successful pilot proves that an idea is worth pursuing. It does not prove that the organization is ready to run it at scale. Those are different questions, and conflating them is one of the most common reasons promising AI initiatives stall in the gap between validation and deployment.

Production means something specific. It means the solution runs reliably outside a controlled environment, with real users, real data volumes, and real consequences when something goes wrong. That requires a set of conditions that a pilot, by design, does not need to satisfy.

What the Transition Actually Demands

Moving from pilot to production is not primarily a technical problem. It is an organizational and operational one. The teams that navigate it successfully tend to address the following consistently:

- Defined ownership. Someone needs to be accountable for the solution in production – not just for the build, but for its ongoing performance, iteration, and alignment with goals. Without a named owner who has both business and technical authority, production deployments drift. Issues go unresolved. Adoption stalls.

- Scalable architecture. The technical choices made during a pilot are optimized for speed and learning, not scale. Before moving to production, the architecture needs to be reviewed and documented for the next phase – covering infrastructure, integration points, data pipelines, and any dependencies that will behave differently under load.

- Data infrastructure and MLOps discipline. AI models in production are not static. They degrade as data drifts, use cases evolve, and user behavior changes. A production deployment needs clean, continuously governed data pipelines and a lifecycle management approach that covers monitoring, retraining triggers, and version control.

- Governance and compliance review. Security posture, data access controls, and regulatory compliance need to be validated for production conditions, not just pilot conditions. What was acceptable in a limited test environment may not hold under full deployment – particularly in regulated industries.

- Change management and adoption planning. A working solution that people do not use delivers no value. Adoption planning – including stakeholder communication, user training, and feedback loops – needs to be treated as a delivery requirement, not an afterthought.

- Clear production KPIs. The metrics used to validate the pilot provide a baseline. Production needs its own success criteria that reflect operational reality: uptime, error rate, adoption rate, time saved, and business impact measured against the KPIs defined in Discovery.
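The monitoring and retraining triggers mentioned under MLOps discipline can be sketched with a drift check. The Python example below uses the Population Stability Index (PSI), a widely used drift measure, to compare a feature's production distribution with its pilot baseline. The bin edges, the sample values, and the 0.25 threshold (a common rule of thumb, not a standard) are assumptions for illustration; real monitoring would cover many features and model outputs.

```python
import math


def bin_shares(values, edges):
    """Share of values in each bin; `edges` are sorted inner cut points."""
    if not values:
        raise ValueError("cannot compute shares for an empty sample")
    counts = [0] * (len(edges) + 1)
    for value in values:
        index = sum(value >= edge for edge in edges)
        counts[index] += 1
    total = len(values)
    # A small floor keeps empty bins from producing log(0).
    return [max(count / total, 1e-6) for count in counts]


def psi(baseline, current, edges):
    """Population Stability Index between a baseline and a current sample."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(current, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def needs_retraining(baseline, current, edges, threshold=0.25):
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return psi(baseline, current, edges) > threshold


# Illustrative values for one numeric feature.
edges = [25, 50, 75]
pilot_baseline = [10, 20, 30, 40, 50, 60, 70, 80]
production_week = [60, 70, 80, 90, 95, 85, 75, 65]

print(needs_retraining(pilot_baseline, pilot_baseline, edges))
print(needs_retraining(pilot_baseline, production_week, edges))
```

A check like this does not decide anything on its own. It gives the named owner a concrete, versionable signal for when the production model should be reviewed or retrained.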

The Role of the Pilot in Preparing for This

This is why the structure of the pilot matters so much. An engagement that ends with a working demo and a vague next step leaves the client to figure out the transition on their own. An engagement that ends with a tested solution, instrumented analytics, a documented architecture, and a scoped next-phase roadmap gives the client something to act on.

That distinction is not cosmetic. It determines whether the pilot becomes the foundation of a production system or another entry in a growing list of experiments that never scale into business functions.

The goal, ultimately, is not to run better pilots. It is to build the organizational capability to turn validated ideas into operational AI – reliably, repeatedly, with decreasing friction and higher ROI each time.

In the End…

The organizations winning with AI are not the ones running the most experiments. They are the ones that treat experimentation as a structured input to a larger strategy – where pilots are designed to produce decisions, not demos, and where every validated idea has a defined path to production before the build begins.

That requires getting several things right at once: a data foundation that supports reliable behavior, a discovery process that surfaces real risks before they become expensive, a validation approach tied to business outcomes rather than technical feasibility, and an operating model that carries momentum from pilot into production.

None of that happens by accident. It happens by design.

Still have questions, or want a second opinion on your AI strategy? Let’s connect – we have seen enough AI consulting engagements to know that the right conversation at the right stage can save months of trial and error.